Reproducible Builds: Reproducible Builds in April 2023

Welcome to the April 2023 report from the Reproducible Builds project!

In these reports we outline the most important things that we have been up to over the past month. And, as always, if you are interested in contributing to the project, please visit our Contribute page on our website.

General news

Trisquel is a fully-free operating system building on the work of Ubuntu Linux. This month, Simon Josefsson published an article on his blog titled "Trisquel is 42% Reproducible!". Simon wrote:

The absolute number may not be impressive, but what I hope is at least a useful contribution is that there actually is a number on how much of Trisquel is reproducible. Hopefully this will inspire others to help improve the actual metric.

Simon wrote another blog post this month on a new tool to ensure that updates to Linux distribution archive metadata (e.g. via apt-get update) will only use files that have been recorded in a globally immutable and tamper-resistant ledger. A similar solution exists for Arch Linux (called pacman-bintrans), which was announced in August 2021 and where an archive of all issued signatures is publicly accessible.
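
Tamper-resistant ledgers of this kind are commonly built as append-only Merkle trees, as in Certificate Transparency (RFC 6962, updated by RFC 9162). As a rough sketch, assuming RFC 6962-style hashing, a client can check that a file's entry is recorded in such a log, given an audit path from the log and a trusted root hash. This is not the code of Simon's tool or of pacman-bintrans, and all function names here are hypothetical.

```python
import hashlib
from typing import List


def leaf_hash(entry: bytes) -> bytes:
    # RFC 6962 domain separation: leaves are hashed with a 0x00 prefix.
    return hashlib.sha256(b"\x00" + entry).digest()


def node_hash(left: bytes, right: bytes) -> bytes:
    # Interior nodes use a 0x01 prefix, so a leaf can never pose as a node.
    return hashlib.sha256(b"\x01" + left + right).digest()


def split_point(n: int) -> int:
    # Largest power of two strictly smaller than n (requires n >= 2).
    k = 1
    while k * 2 < n:
        k *= 2
    return k


def tree_root(entries: List[bytes]) -> bytes:
    if not entries:
        return hashlib.sha256(b"").digest()
    if len(entries) == 1:
        return leaf_hash(entries[0])
    k = split_point(len(entries))
    return node_hash(tree_root(entries[:k]), tree_root(entries[k:]))


def inclusion_proof(index: int, entries: List[bytes]) -> List[bytes]:
    # Audit path (sibling hashes) ordered from the leaf up to the root.
    if len(entries) <= 1:
        return []
    k = split_point(len(entries))
    if index < k:
        return inclusion_proof(index, entries[:k]) + [tree_root(entries[k:])]
    return inclusion_proof(index - k, entries[k:]) + [tree_root(entries[:k])]


def verify_inclusion(entry: bytes, index: int, size: int,
                     proof: List[bytes], root: bytes) -> bool:
    # Client-side check following the algorithm in RFC 9162, section 2.1.3.2.
    if index >= size:
        return False
    fn, sn = index, size - 1
    r = leaf_hash(entry)
    for p in proof:
        if sn == 0:
            return False
        if fn & 1 or fn == sn:
            r = node_hash(p, r)
            # Skip levels where this subtree has no right-hand sibling.
            while not fn & 1 and fn != 0:
                fn >>= 1
                sn >>= 1
        else:
            r = node_hash(r, p)
        fn >>= 1
        sn >>= 1
    return sn == 0 and r == root
```

Any change to a recorded entry changes its leaf hash and therefore the recomputed root, so a modified file fails verification against the trusted root.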

Joachim Breitner wrote an in-depth blog post on a bootstrap-capable GHC, the primary compiler for the Haskell programming language. As a quick background to what this is trying to solve: in order to generate a fully trustworthy compile chain, trustworthy root binaries are needed. A popular approach to this problem is called bootstrappable builds, where the core idea is to break previously-circular build dependencies by creating a new dependency path using simpler prerequisite versions of software. Joachim takes a somewhat recursive approach to the problem for Haskell, leading to the inadvertently humorous question: "Can I turn all of GHC into one module, and compile that?"
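
The circular-dependency problem that bootstrappable builds address can be illustrated with Python's standard graphlib module. In this sketch, whose package names are hypothetical and do not describe GHC's actual bootstrap path, a self-hosting compiler has no valid build order until a simpler prerequisite provides a new dependency path.

```python
from graphlib import CycleError, TopologicalSorter
from typing import Dict, List, Optional, Set


def build_order(deps: Dict[str, Set[str]]) -> Optional[List[str]]:
    """Return an order in which every package is built after its
    build-dependencies, or None if the graph contains a cycle."""
    try:
        return list(TopologicalSorter(deps).static_order())
    except CycleError:
        return None


# Hypothetical self-hosting compiler: building it needs a binary of itself.
circular = {"compiler": {"compiler"}}

# Hypothetical bootstrap path: a simpler prerequisite, buildable from a
# small trusted C toolchain, stands in for the missing binary.
bootstrapped = {
    "compiler": {"compiler-stage0"},
    "compiler-stage0": {"c-toolchain"},
}

print(build_order(circular))      # None: there is no starting point
print(build_order(bootstrapped))  # ['c-toolchain', 'compiler-stage0', 'compiler']
```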

Elsewhere in the world of bootstrapping, Janneke Nieuwenhuizen and Ludovic Courtès wrote a blog post on the GNU Guix blog announcing The Full-Source Bootstrap, specifically:

[…] the third reduction of the Guix bootstrap binaries has now been merged in the main branch of Guix! If you run guix pull today, you get a package graph of more than 22,000 nodes rooted in a 357-byte program, something that had never been achieved, to our knowledge, since the birth of Unix.

More info about this change is available on the post itself, including:

The full-source bootstrap was once deemed impossible. Yet, here we are, building the foundations of a GNU/Linux distro entirely from source, a long way towards the ideal that the Guix project has been aiming for from the start.

There are still some daunting tasks ahead. For example, what about the Linux kernel? The good news is that the bootstrappable community has grown a lot, from two people six years ago, there are now around 100 people in the #bootstrappable IRC channel.

Michael Ablassmeier created a script called pypidiff as they were looking for a way to track differences between packages published on PyPI. According to Michael, pypidiff uses diffoscope to create reports on the published releases and automatically pushes them to a GitHub repository. This can be seen on the pypi-diff GitHub page (example).
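
pypidiff delegates the real comparison to diffoscope, which recurses much deeper into file formats. As a minimal sketch of the first step only, and assuming the releases being compared are wheels (which are zip archives), the following reports which archive members were added, removed or changed. The function names are hypothetical and not part of pypidiff.

```python
import hashlib
import io
import zipfile
from typing import Dict, List


def member_digests(archive: bytes) -> Dict[str, str]:
    """Map each file inside a zip archive (e.g. a wheel) to its SHA-256."""
    with zipfile.ZipFile(io.BytesIO(archive)) as zf:
        return {name: hashlib.sha256(zf.read(name)).hexdigest()
                for name in zf.namelist()}


def compare_archives(old: bytes, new: bytes) -> Dict[str, List[str]]:
    """Summarise which members were added, removed or changed."""
    a, b = member_digests(old), member_digests(new)
    return {
        "added": sorted(b.keys() - a.keys()),
        "removed": sorted(a.keys() - b.keys()),
        "changed": sorted(n for n in a.keys() & b.keys() if a[n] != b[n]),
    }


def make_zip(files: Dict[str, bytes]) -> bytes:
    # Helper used only to build the example archives below.
    buf = io.BytesIO()
    with zipfile.ZipFile(buf, "w") as zf:
        for name, data in files.items():
            zf.writestr(name, data)
    return buf.getvalue()


old = make_zip({"pkg/__init__.py": b"VERSION = '1.0'\n", "pkg/util.py": b"pass\n"})
new = make_zip({"pkg/__init__.py": b"VERSION = '1.1'\n", "pkg/cli.py": b"pass\n"})
print(compare_archives(old, new))
# {'added': ['pkg/cli.py'], 'removed': ['pkg/util.py'], 'changed': ['pkg/__init__.py']}
```

Only the "changed" members would then need a detailed, diffoscope-style inspection.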

Eleuther AI, a non-profit AI research group, recently unveiled Pythia, a collection of 16 Large Language Models (LLMs) trained on public data in the same order, designed specifically to facilitate scientific research. According to a post on MarkTechPost:

Pythia is the only publicly available model suite that includes models that were trained on the same data in the same order [and] all the corresponding data and tools to download and replicate the exact training process are publicly released to facilitate further research.

These properties are intended to allow researchers to understand how gender bias (etc.) can be affected by training data and model scale.

Back in February's report, we reported on a series of changes to the Sphinx documentation generator that were initiated after attempts to get the alembic Debian package to build reproducibly. Although Chris Lamb was able to identify the source problem and provided a potential patch that might fix it, James Addison has taken the issue in hand, leading to a large amount of activity resulting in a proposed pull request that is waiting to be merged.

WireGuard is a popular Virtual Private Network (VPN) service that aims to be faster, simpler and leaner than other solutions to create secure connections between computing devices. According to a post on the WireGuard developer mailing list, the WireGuard Android app can now be built reproducibly so that its contents can be publicly verified. According to the post by Jason A. Donenfeld, the F-Droid project now does this verification by comparing their build of WireGuard to the build that the WireGuard project publishes. When they match, the new version becomes available. This is very positive news.
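
At its simplest, the kind of independent verification described here comes down to checking that two builds are bit-for-bit identical, for example by comparing SHA-256 digests. The sketch below assumes plain files; it does not reproduce F-Droid's actual process, which for signed APKs also has to deal with the developer's signature, and the function names are hypothetical.

```python
import hashlib
from pathlib import Path


def sha256_file(path: Path, chunk_size: int = 1 << 16) -> str:
    """Hash a file in chunks so large artifacts need not fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def builds_match(rebuilt: Path, published: Path) -> bool:
    """True only if the independent rebuild is bit-for-bit identical."""
    return sha256_file(rebuilt) == sha256_file(published)
```

A verifier would call something like builds_match(Path("rebuilt/app.apk"), Path("published/app.apk")), with hypothetical paths, and only release the new version when it returns True.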

Author and public speaker V. M. Brasseur published a sample chapter from her upcoming book on corporate open source strategy, on the topic of Software Bill of Materials (SBOM):

A software bill of materials (SBOM) is defined as a nested inventory for software, a list of ingredients that make up software components. When you receive a physical delivery of some sort, the bill of materials tells you what's inside the box. Similarly, when you use software created outside of your organisation, the SBOM tells you what's inside that software. The SBOM is a file that declares the software supply chain (SSC) for that specific piece of software. […]
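
To make the "nested inventory" idea concrete, here is a minimal sketch of a component tree and a depth-first walk that lists every ingredient. The schema and package names are hypothetical; real SBOM formats such as SPDX and CycloneDX define far richer structures.

```python
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class Component:
    """One entry in a nested software inventory (hypothetical schema)."""
    name: str
    version: str
    components: List["Component"] = field(default_factory=list)


def flatten(component: Component) -> List[Tuple[str, str]]:
    """Walk the inventory depth-first and list every (name, version)."""
    items = [(component.name, component.version)]
    for child in component.components:
        items.extend(flatten(child))
    return items


app = Component("example-app", "1.0", [
    Component("libexample", "2.3", [Component("zlib", "1.2.13")]),
    Component("libother", "0.9"),
])
print(flatten(app))
# [('example-app', '1.0'), ('libexample', '2.3'), ('zlib', '1.2.13'), ('libother', '0.9')]
```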

Several distributions noticed that recent versions of the Linux kernel are no longer reproducible because the BPF Type Format (BTF) metadata is not generated in a deterministic way. This was discussed on the #reproducible-builds IRC channel, but no solution appears to be in sight for now.

Community news

On our mailing list this month:

- Larry Doolittle shared an interesting puzzle with the group where three bytes in a .zip file were different between two builds.

- Alexis PM wrote a message as they had observed a difference between binaries available in the Debian archive and the ones on tests.reproducible-builds.org. The thread generated a number of replies, including interesting responses from Vagrant Cascadian […] and kpcyrd […].
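
The report does not say what caused the three differing bytes in Larry's puzzle. As a hedged illustration of how such differences are usually narrowed down, the sketch below lists the byte offsets at which two builds differ, using a stored modification timestamp (a common source of nondeterminism in zip files) as an assumed example cause. All names are hypothetical.

```python
import io
import zipfile
from typing import List, Tuple


def differing_offsets(a: bytes, b: bytes) -> List[int]:
    """Offsets where two builds differ; a length mismatch counts as a difference."""
    common = min(len(a), len(b))
    offsets = [i for i in range(common) if a[i] != b[i]]
    offsets.extend(range(common, max(len(a), len(b))))
    return offsets


def zip_with_timestamp(date_time: Tuple[int, int, int, int, int, int]) -> bytes:
    """Write a one-file zip whose only varying input is the stored mtime."""
    buf = io.BytesIO()
    with zipfile.ZipFile(buf, "w") as zf:
        zf.writestr(zipfile.ZipInfo("hello.txt", date_time=date_time), b"hello\n")
    return buf.getvalue()


first = zip_with_timestamp((2023, 4, 1, 12, 0, 0))
second = zip_with_timestamp((2023, 4, 1, 12, 0, 10))
pinned = zip_with_timestamp((2023, 4, 1, 12, 0, 0))

print(differing_offsets(first, second))  # non-empty: the timestamp fields differ
print(differing_offsets(first, pinned))  # [] once the timestamp is pinned
```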

Holger Levsen gave a talk at foss-north 2023 in Gothenburg, Sweden on the topic of Reproducible Builds, the first ten years.

There were also a number of updates to our website, including:

- Chris Lamb attempted a number of ways to try and fix literal ": .lead" appearing in the page […][…][…], made all the "Back to who is involved" links italic […], and corrected the syntax of the _data/sponsors.yml file […].

- Holger Levsen added his recent talk […], added Simon Josefsson, Mike Perry and Seth Schoen to the contributors page […][…][…], reworked the People page a little […][…], as well as fixed the spelling of Arch Linux […].

Lastly, Mattia Rizzolo moved some old sponsors to a former section […] and Simon Josefsson added Trisquel GNU/Linux. […]

Debian

- Vagrant Cascadian reported on Debian's build-essential package set, which was inspired by "how close we are to making the Debian build-essential set reproducible and how important that set of packages are in general". Vagrant mentioned that: "I have some progress, some hope, and I daresay, some fears". […]

- Debian Developer Cyril Brulebois (kibi) filed a bug against snapshot.debian.org after they noticed that there are many missing dinstalls; that is to say, the snapshot service is not capturing 100% of the historical states of the Debian archive. This is relevant to reproducibility because, without the availability of historical versions, it becomes impossible to repeat a build at a future date in order to correlate checksums.

- 20 reviews of Debian packages were added, 21 were updated and 5 were removed this month, adding to our knowledge about identified issues. Chris Lamb added a new build_path_in_line_annotations_added_by_ruby_ragel toolchain issue. […]

- Mattia Rizzolo announced that the data for the stretch archive on tests.reproducible-builds.org has been archived. This matches the archival of stretch within Debian itself. This is of some historical interest, as stretch was the first Debian release regularly tested by the Reproducible Builds project.

Upstream patches

The Reproducible Builds project detects, dissects and attempts to fix as many currently-unreproducible packages as possible. We endeavour to send all of our patches upstream where appropriate. This month, we wrote a large number of such patches, including:

- Bernhard M. Wiedemann:

  - ghc (work around a parallelism-related issue)

- Jan Zerebecki:

  - ghc (report a parallelism-related issue)

- Chris Lamb:

  - #1034147 filed against ruby-regexp-parser.

- Vagrant Cascadian:

  - #1033954, #1033955 and #1033957 filed against pike8.0.
  - #1033958 and #1033959 filed against binutils.
  - #1034129 filed against lomiri-action-api.
  - #1034199 and #1034200 filed against lomiri.
  - #1034327 filed against nmodl.
  - #1034423 filed against php8.2.
  - #1034431 filed against qemu.
  - #1034499 filed against twisted.
  - #1034740 filed against boost1.74.
  - #1034892 filed against php8.2.
  - #1035324 filed against shaderc.
  - #1035329 and #1035331 filed against jackd2.

diffoscope development

diffoscope version 241 was uploaded to Debian unstable by Chris Lamb. It included contributions already covered in previous months, as well as a change by Chris Lamb to add a missing raise statement that was accidentally dropped in a previous commit. […]

Testing framework

The Reproducible Builds project operates a comprehensive testing framework (available at tests.reproducible-builds.org) in order to check packages and other artifacts for reproducibility. In April, a number of changes were made, including:

- Holger Levsen:

  - Significant work on a new Documented Jenkins Maintenance (djm) script to support logged maintenance of nodes, etc. […][…][…][…][…][…]
  - Add the new APT repository URL for Jenkins itself with a new signing key. […][…]
  - In the Jenkins shell monitor, allow 40 GiB of files for diffoscope for the Debian experimental distribution, as Debian is frozen around the release at the moment. […]
  - Update the Arch Linux testing to clean up leftover files in /tmp/archlinux-ci/ after three days. […][…][…]
  - Mark a number of nodes hosted by Oregon State University Open Source Lab (OSUOSL) as online and offline. […][…][…]
  - Update the node health checks to detect failures to end schroot sessions. […]
  - Filter out another duplicate contributor from the contributor statistics. […]

- Mattia Rizzolo:

  - Code changes to properly archive the stretch Debian distribution. […][…][…][…]
  - Introduce the archived suites configuration option. […][…]
  - Fix the KGB bot configuration to support pyyaml 6.0 as present in Debian bookworm. […]
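
The actual cleanup is part of the project's Jenkins shell scripts, which are not reproduced here. A Python sketch of the same policy, deleting regular files under a directory such as /tmp/archlinux-ci/ that have not been modified for three days, might look like the following; the function name and the use of modification time as the age criterion are assumptions.

```python
import time
from pathlib import Path
from typing import List, Optional

THREE_DAYS = 3 * 24 * 60 * 60  # seconds


def remove_stale_files(directory: Path, max_age: float = THREE_DAYS,
                       now: Optional[float] = None) -> List[Path]:
    """Delete regular files under `directory` not modified within `max_age` seconds."""
    now = time.time() if now is None else now
    removed = []
    # Materialise the listing first so nothing is deleted mid-walk.
    for path in list(directory.rglob("*")):
        if path.is_symlink() or not path.is_file():
            continue  # leave directories and symlinks alone
        if now - path.stat().st_mtime > max_age:
            path.unlink()
            removed.append(path)
    return sorted(removed)
```

Calling remove_stale_files(Path("/tmp/archlinux-ci")) from a periodic job would keep the directory from growing without bound, while recent files from running builds are left untouched.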

If you are interested in contributing to the Reproducible Builds project, please visit our Contribute page on our website. You can also get in touch with us via:

- IRC: #reproducible-builds on irc.oftc.net.

- Twitter: @ReproBuilds

- Mailing list: rb-general@lists.reproducible-builds.org

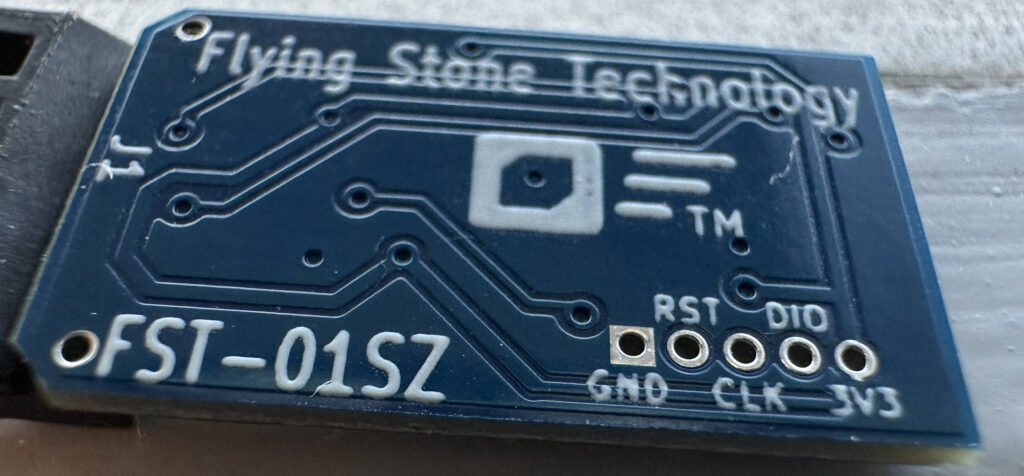

I acquired some unusual input devices to experiment with, like a CNC control panel and a Bluetooth pedal page turner.

These identify and behave like a keyboard, sending nice and simple keystrokes, and can be accessed with no drivers or other special software. However, their keystrokes appear together with keystrokes from normal keyboards, which is the expected default when plugging in a keyboard, but not what I want in this case. I'd also like them to be readable via evdev and accessible by my own user.

Here's the udev rule I cooked up to handle this use case:
